Explainable AI to ensure trust in clinical Decision Support Systems

Challenge

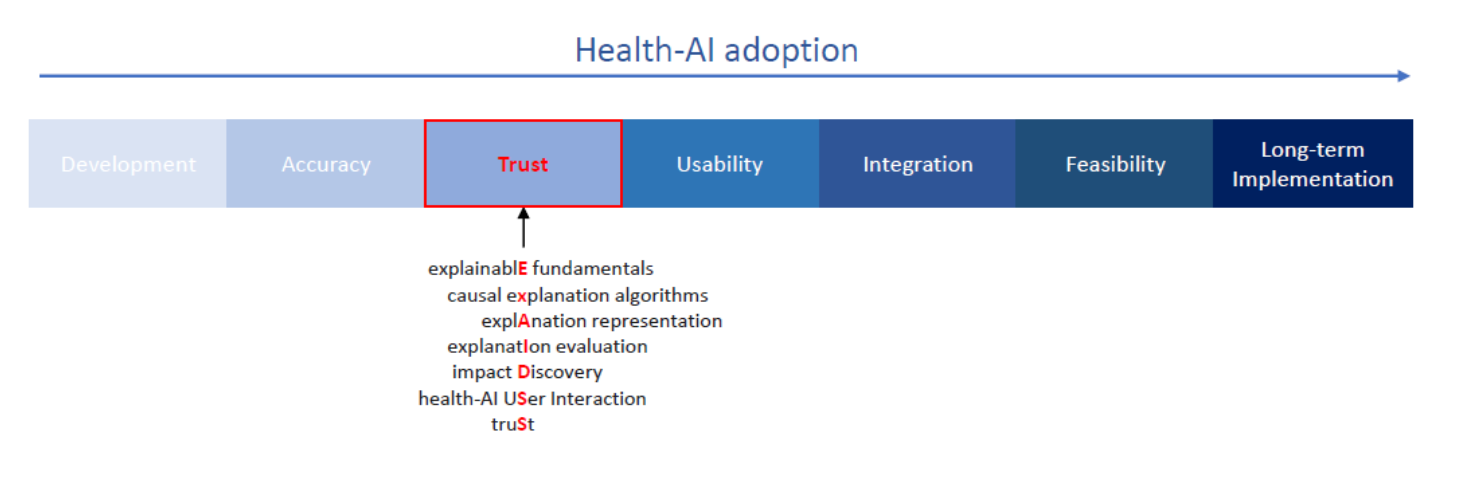

AI systems are increasingly being developed to support clinical decisions, but adoption in healthcare remains limited when clinicians and patients cannot understand or trust how recommendations are produced. In safety-critical settings such as medicine, accuracy alone is not enough. AI systems also need to be transparent, accountable, and usable in practice. ExAIDSS was established to address this gap by asking a fundamental question: what makes an explanation of health AI actually useful and trustworthy for real users?

Approach

ExAIDSS (Explainable AI to ensure trust in clinical Decision Support Systems) is a five-year Royal Academy of Engineering fellowship led by Dr Evangelia Kyrimi. The project develops explainable AI for healthcare by combining causal modelling, explanation design, and evaluation. Its research programme includes: identifying the fundamentals of a “good” explanation; developing causal explanation algorithms; designing explanations for different users and decision contexts; creating protocols for evaluating explanations; and integrating these methods into healthcare digital platforms.

A key strand of the project has been to establish stronger conceptual foundations for explainability in health AI. In 2025, the team published work in the journal AI and Ethics proposing a definition of explanation in health AI and a comprehensive set of attributes for what counts as a good explanation, drawing on the literature and on expert input gathered through a Delphi study. Earlier work introduced a process for evaluating explanations for transparent and trustworthy AI prediction models, helping move the field beyond ad hoc assessment.

Alongside its research publications, ExAIDSS has helped build the research community through workshops and dissemination activities, including Causal XAI events and public-facing writing on why AI explanations matter.

Impact

ExAIDSS is influencing how explainability in health AI is defined, assessed, and translated into practice. Its work helps move the field from vague calls for “transparent AI” toward concrete standards for explanation quality and evaluation. In doing so, it supports the long-term adoption of clinical AI systems that are not only accurate but also understandable, contestable, and fit for real-world healthcare decision-making.